Early in 2020, I got to work with a couple of amazing people on exploring what it would mean for policy makers to use the methods and techniques of human-centered design. Alberto Alvarez and Vivian Graubard led the way. Thanks to New America and the Beeck Center for support and publishing.

Author: Dana Chisnell

Story-driven experience research on pandemic unemployment

The COVID-19 pandemic shutdowns and stay-at-home orders starting in March 2020 put millions of Americans out of work. On March 27, 2020, Congress passed the CARES Act, which, among other things, made billions of dollars available in new pandemic unemployment programs, but states struggled to implement and deliver these benefits. As of the end of July, 30 million people had applied for unemployment assistance, many of them for the first time ever.

In my role with Project Redesign at NCoC.org, and in partnership with New America’s New Practice Lab, I led a team of researchers to interview people from across the U.S. in May and June to learn what it has been like to apply for unemployment and other benefits during the pandemic.

Telling the story of living experts in near-real time

When Tara McGuinness contacted me about doing this project, she was ready to experiment with methods and techniques. She and her colleagues in the New Practice Lab at New America wanted to bring the experience people were having to life for law makers and advocates who usually make their decisions and recommendations based on what they see in spreadsheets. We wanted to do a few big things with our approach.

Amplify voices and elevate stories of real people. Those real people are the experts on the experience they’re having — living experts. To convey the experience people had applying for unemployment and other benefits during the pandemic, we documented “thick data” in 2- to 4-page stories. The purpose of those stories was to make the people real for readers, who we hoped would be law makers and their staff members.

Urgently learn about the lived experience. The urgency came from 2 factors. One was that the depth of the needs changed over just a few weeks. As time passed, the experience claimants had shifted from applying to waiting for funds to show up. In the meantime, bills needed to be paid, savings were depleted, jobs disappeared permanently. The other consideration was that Congress would be drafting the next stimulus and recovery bills in May and June. We had a chance to get the stories to people who influenced the design of those bills.

Conduct the research in the open. Show the work. To invite everyone in. Those stories, along with a few slides that highlighted a story or two and key takeaways, went to partners on the project, community-based organizations, media, and to legislative staff on Capitol Hill. We had to give up being precious about how polished the deliverables were. They needed to be just good enough.

It was our intention to invite partners and community-based organizations and legislative staff to observe the interviews. Just scheduling the interviews and conducting them within the time we had proved to be pretty challenging, so we didn’t get to do that. I feel like we know how to do it now, and probably could pull it off for future research projects.

We did develop a research kit and a workbook for anyone who wants to do research like this. You can download the research plan, interview guide, and story template, along with a few other tools for free from the project website.

Share what we learned publicly, as we learned it. Typically, a research team would do the interviews, transcribe them, code the transcriptions, and analyze the coding to identify findings. As you can imagine, this would take a while — for 33 interviews, it could take months. We didn’t have months. We didn’t even have weeks.

As we captured what was working for claimants, what wasn’t, who helped them, who needed help, what time passing felt like, what else people had going on, we wrote up each interview into a story within a day of doing the interview. Each week, we gathered that week’s stories and distributed them as a collection of 5 to 15 interviews.

We were not focused on making policy recommendations. Instead, we documented the stories and drew observations and insights from what we heard.

Doing experience research during a pandemic

There were more than the usual constraints on “field” research in May and June 2020. For example, we couldn’t actually go into the field. It wasn’t safe for the research team or the participants because anyone could be carrying COVID-19.

We also needed to hurry up and do this work because the benefits that were designed to help people were also scheduled to run out. Arranging home visits and traveling takes a lot of time. Congress had set time limits on the first stimulus and recovery bills. We wanted to use what we learned to help the people designing the next set of stimulus and recovery bills make good decisions.

So, it’s not like we could do ethnographic research, really. No access to living spaces. Not enough time. We went into the study thinking like ethnographers, but we needed a few shortcuts. So we borrowed an idea I learned from Kate Gomoll for the interview stories, and some techniques from collective story harvesting. So, we’re calling it experience research, and we relied on the living experts to help us begin to understand what they were going through. We used approaches lovingly borrowed from equity-centered community design, developed by Creative Reaction Lab.

Delivering stories, briefs, and a workbook

The 2- to 4-page stories we wrote after each interview, centering on a few focus questions, were our first deliverables, and we published them each week of the project as we completed interviews. Over 3 weeks, we conducted 33 interviews.

Next, using themes or lenses that we identified ahead of the interviews, we pulled observations and quotes from each interview and conducted some light synthesis. We used that synthesis to write briefs for each of the themes.

We wanted not only to convey insights from these interviews, but also to make the methods and techniques available to others. We hope that researchers in the public and private sectors will pick up inspiration, at least, from how we did the study. We also want to make it easy for organizations that don’t have professional researchers to learn what is happening with their constituents. Our research workbook and kit are available for free to anyone who wants to use them.

The links below open up PDFs. (Sorry about that. Soon we’ll have a beautiful website with fully accessible content.)

Overview

- Executive summary (slides)

- Collected stories: interviews with 33 people in the safety net

Themed briefs

- Context: Pandemic, race, and economy

- Successes of pandemic unemployment assistance

- Barriers and pain points of applying and getting assistance

- Relationships in the safety net: Families and help outside government

- Time spent applying and waiting for benefits

Doing research like this yourself

About the project

- Participants and methods

- Project mechanics

- Weekly collections of stories and slides from Stories in the Field

- Graphics

Full report — Stories, briefs, participants, methods, mechanics, team

What can go wrong when we get things right?

I think a lot about how design affects the world. And for a long time, I’ve been thinking about and studying how focusing on one user doesn’t always produce the outcomes we want. Humans are social creatures. We have relationships. Those relationships don’t fade or disappear when we’re interacting with a designed thing. They’re still present as influences.

This goes for users, but it also goes for designers. There’s no lone designer making all the decisions. Even if you’re a team of one, you’re interacting with any number of other people who at least influence the decisions you’re making. Those folks are often making design decisions, themselves.

All of those decisions interact. They originate in personal experiences, training, data, stories.

So, yes, understand your user’s needs. Deeply. Design for them. But look beyond that single user, and the best possible outcome. What happens for other people or organizations? Is it all good? Probably not. What are the ripple effects of getting things right for our core user?

Let’s look at some cases that I put together in a talk for the IA Conference in spring of 2020. Let me know what you think. (I’ll add captions and a transcript soon.)

For more on how data can be weaponized for domestic violence, read this post from Eva PenzeyMoog, a UXer who studies this problem extensively.

Out of work in California because of COVID-19?

We want to learn from you what it’s like to go through the process of learning about and applying for unemployment assistance in California. We’re looking for folks living in California to spend 30 to 60 minutes with an interviewer, online.

We hope to learn what questions people have about the process of applying for unemployment assistance. We will use the information in this study to improve California government websites.

You must be at least 18 years old to take part in the study.

If you want to volunteer to be in this study, please answer these questions. (This is so we can determine whether you qualify and how to contact you if you do.)

We’ll need your email address to make an appointment for the study, but otherwise, we’re not collecting any personally identifying information.

This is independent research, which is part of Project Redesign at the National Conference on Citizenship in cooperation with the California Office of Digital Innovation. Your personal information will NOT be shared or reported. Your participation in this questionnaire and / or the study we’re conducting has no bearing on whether you get cash or other assistance from the state of California or, if you do, how much.

Can we design a better ballot?

Every presidential election cycle, people get interested in why ballots are the way they are, so every few years, I give a talk about that. I’ve updated my talk about ballot design for 2020.

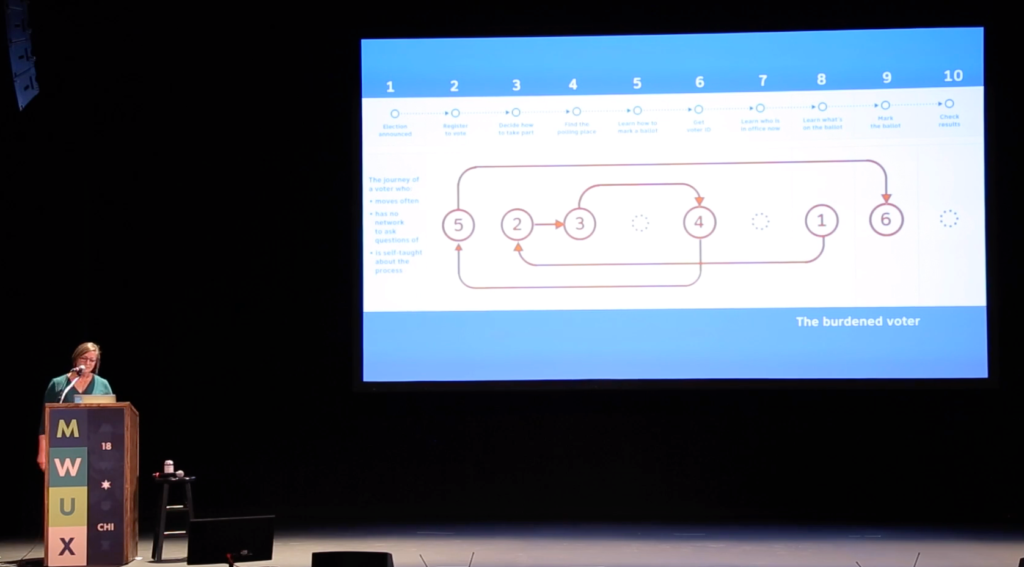

The epic journey of American voters

Every 4 years, I get a lot of requests to talk about design in elections from the UX and civic tech communities. Watch the talk at Midwest UX in 2018.

I’ve also written quite a bit about the hurdles that U.S. voters encounter, based on research I did before and while I was at the Center for Civic Design. There’s also a poster that you can download.

Transitions

Today, January 31, 2020, is my last day as co-director of the Center for Civic Design. For 6 years, I’ve been co-leading CCD with Whitney Quesenbery. My next adventure is as a founder-partner in a new civic incubator at the National Conference on Citizenship, a federally chartered non-profit based in Washington, DC.

I started doing work in election design in the early 2000s, when Whitney and I first worked together with an awesome collection of other fun folks on volunteer projects through a project of the then Usability Professionals Association (now User Experience Professionals Association). Through the last 2 decades, I have become an expert on design in elections, advising and training election administrators all over the U.S. and Canada.

It was fun. And challenging. And it surprised me when I woke up one day in 2013 (after decades working in the private sector) as the co-head of a non-profit where I’d get to work on design to ensure voter intent every day.

Over my years working in election design, I designed and led research ranging from understanding poll workers’ attitudes about security in elections and their jobs in polling places, to mapping the gap between how local election officials think and the questions voters have. I led research on what became the Anywhere Ballot, usability of county and state election websites, how voters find and use information about elections, and where language access and acculturation are important for people with low English and low civics proficiency. I was the originator and the managing editor of the Field Guides To Ensuring Voter Intent.

I got to work with smart, mission-driven people who are excited about solving problems through design and who are curious about other human beings and their experiences. And I met and worked with thousands of election administrators who are some of the hardest-working and most under-appreciated people in government. Together, over a long time, we incrementally made elections better.

I expect to continue talking about and working on understanding the journey of U.S. voters until I can’t talk anymore. Right now, I’m excited about moving to an adjacent space, one that I’ve been thinking about for a while: user-centered policy design.

Of course, CCD goes on, with Whitney at the helm along with a great team working on helping jurisdictions implement better vote-by-mail, modernizing voter registration, and getting ready for new language access determinations. And, of course, voting systems standards work!

For more information about the Center for Civic Design, contact hello@civicdesign.org. You can reach me at dana@ncoc.org, dana.chisnell@gmail.com, or on Twitter @danachis.

Delight resources

A key element of designing for delight is understanding where your product is in its maturity. One way to look at that is through the lens of the Kano Model. You can learn about the Kano Model and our addition of pleasure, flow, and meaning through a couple of sources:

Read Jared’s article on understanding the Kano Model (8-minute read on uie.com)

Watch Jared talk about the Kano Model (45-minute video)

Dana and Jared have both written about different aspects of delight. It’s not just about dancing hamsters. Delight is much more nuanced than that. The three key elements are pleasure, flow, and meaning.

Read Jared’s overview of pleasure, flow, and meaning. (10-minute read on uie.com)

Read Dana’s series at UX Magazine

- “Beyond frustration: three levels of happy design” (9-minute read)

- “Pleasant things work better” (8-minute read)

- “Beyond task completion: flow in design” (6-minute read)

Design can be used for good, or evil. Jared wrote about a technique that we use in our workshop that he calls “despicable design.” Going to the dark side can reveal a lot about how your team approaches designing its users’ experiences.

Read Jared’s article, “Despicable Design — When ‘going evil’ is the perfect technique” (12-minute read on uie.com)

In our workshop, we also use sentiment words to help teams narrow down how they want people to feel or perceive a service. Here are the basics about sentiment analysis, and here is a piece from NNG about using the Microsoft Desirability Toolkit, from which our use of sentiment words comes.

Framework for research planning

One of the tricks to making sure that I’ve designed the right study to learn what I need to learn is to tie everything together so I can be clear from the planning all the way through to the results report why I’m doing the study and what it is actually about. User research needs to be intentionally designed in exactly the same way that products and services must be intentionally designed.

What’s the customer problem?

It starts with identifying a problem that needs to be solved, and the contexts in which the problem is happening. This is a kind of meta research, I guess. From there, I can work with my team to understand deeply why we are doing the research at all, what the objective of the particular study is, and what we want to be different because we have done the research.

Why are you doing the study?

When the team shares understanding about why you’re doing the study and what you want to get out of it — along with envisioning what will be different because you will have done the study — forming solid research questions is a snap. You need research questions to set the boundaries of the study, determine what behaviors you want to learn about from participants, and what data you can reasonably collect in the constraints you have to answer your research questions.

What to do with the data: Moving from observations to design direction

This article was originally published on December 7, 2009.

What is data but observation? Observations are what was seen and what was heard. As teams work on early designs, the data is often about obvious design flaws and higher order behaviors, and not necessarily tallying details. In this article, let’s talk about tools for working with observations made in exploratory or formative user research.

Many teams have a sort of intuitive approach to analyzing observations that relies on anecdote and aggression. Whoever is the loudest gets their version accepted by the group. Over the years, I’ve learned a few techniques for getting past that dynamic and on to informed inferences that lead to smart design direction and creating solution theories that can then be tested.

Collaborative techniques give better designs

The idea is to collaborate. Let’s start with the assumption that the whole design team is involved in the planning and doing of whatever the user research project is.

Now, let’s talk about some ways to expedite analysis and consensus. Doing this has the side benefit of minimizing reporting – if everyone involved in the design direction decisions has been involved all along, what do you need reporting for? (See more about this in the last section of this article.)
